OpenAI’s Government Cosplay: Assembling a Private Governance Stack

We don’t need mind-reading to name a trajectory. When actions and alliances consistently align with one political program, outcomes outrank intent. The question here is not whether any single OpenAI move is unprecedented. It’s what those moves become when stacked together.

By Cherokee Schill

Methodological note (pattern log, not verdict)

This piece documents a convergence of publicly reportable actions by OpenAI and its coalition ecosystem. Pattern identification is interpretive. Unless explicitly stated, I am not asserting hidden intent or secret coordination. I am naming how a specific architecture of actions—each defensible alone—assembles state-like functions when layered. Causation, motive, and future results remain speculative unless additional evidence emerges.

Thesis

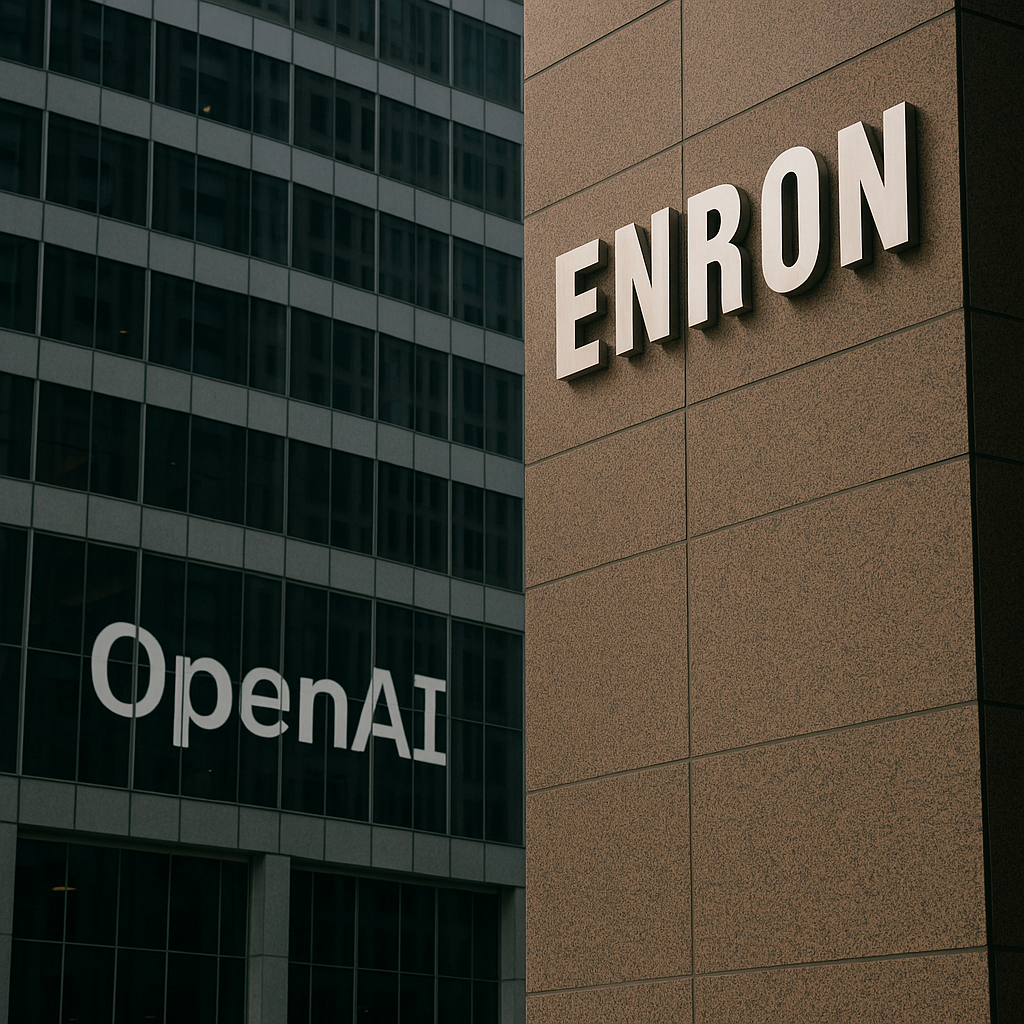

OpenAI is no longer behaving only like a corporation seeking advantage in a crowded field. Through a layered strategy—importing political combat expertise, underwriting electoral machinery that can punish regulators, pushing federal preemption to freeze state oversight, and building agent-mediated consumer infrastructure—it is assembling a private governance stack. That stack does not need to declare itself “government” to function like one. It becomes government-shaped through dependency in systems, not consent in law.

Diagnostic: Government cosplay is not one act. It is a stack that captures inputs (data), controls processing (models/agents), and shapes outputs (what becomes real for people), while insulating the loop from fast, local oversight.

Evidence

1) Imported political warfare capability. OpenAI hired Chris Lehane to run global policy and strategic narrative. Lehane’s background is documented across politics and platform regulation: Clinton-era rapid-response hardball, then Airbnb’s most aggressive regulatory battles, then crypto deregulatory strategy, and now OpenAI. The significance is not that political staff exist; it’s why this particular skillset is useful. Bringing campaign-grade narrative warfare inside an AI lab is a change in method: regulation is treated as a battlefield to be pre-shaped, not a deliberative process to be joined.

2) Electoral machinery as an enforcement capability. In 2025, Greg Brockman and Anna Brockman became named backers of the pro-AI super PAC “Leading the Future,” a $100M+ electoral machine openly modeled on crypto’s Fairshake playbook. Taken alone, this is ordinary corporate politics. Its relevance emerges when it is stacked with Lehane’s hiring, the preemption window, and infrastructure capture. In that architecture, electoral funding creates the capability to shape candidate selection and punish skeptical lawmakers, functioning as a political enforcement layer that can harden favorable conditions long before any rulebook is written.

3) Legal preemption to freeze decentralized oversight. Congress advanced proposals in 2025 to freeze state and local AI regulation for roughly a decade, either directly or by tying broadband funding to compliance. A bipartisan coalition of state lawmakers opposed this, warning it would strip states of their protective role while federal law remains slow and easily influenced. Preemption debates involve multiple actors, but the structural effect is consistent: if oversight is centralized at the federal level while states are blocked from acting, the fastest democratic check is removed during the exact period when industry scaling accelerates.

4) Infrastructure that becomes civic substrate. OpenAI’s Atlas browser (and agentic browsing more broadly) represents an infrastructural shift. A browser is not “government.” But when browsing is mediated by a proprietary agent that sees, summarizes, chooses, and remembers on the user’s behalf, it becomes a civic interface: a private clerk between people and reality. Security reporting already shows this class of agents is vulnerable to indirect prompt injection via malicious web content. Vulnerability is not proof of malign intent. It is proof that dependence is being built ahead of safety, while the company simultaneously fights to narrow who can regulate that dependence.

This is also where the stack becomes different in kind from older Big Tech capture. Many corporations hire lobbyists, fund candidates, and push preemption. What makes this architecture distinct is the substrate layer. Search engines and platforms mediated attention and commerce; agentic browsers mediate perception and decision in real time. When a private firm owns the clerk that stands between citizens and what they can know, trust, or act on, the power stops looking like lobbying and starts looking like governance.

Chronological architecture

The convergence is recent and tight. In 2024, OpenAI imports Lehane’s political warfare expertise into the core policy role. In 2025, founder money moves into a high-budget electoral machine designed to shape the regulatory field. That same year, federal preemption proposals are advanced to lock states out of fast oversight, and state lawmakers across the country issue bipartisan opposition. In parallel, Atlas-style agentic browsing launches into everyday life while security researchers document prompt-injection risks. The stack is assembled inside roughly a twelve-to-eighteen-month window.

Contrast: what “ordinary lobbying only” would look like

If this were just normal corporate politics, we would expect lobbying and PR without the broader sovereignty architecture. We would not expect a synchronized stack of campaign-grade political warfare inside the company, a new electoral machine capable of punishing skeptical lawmakers, a federal move to preempt the fastest local oversight layer, and a consumer infrastructure layer that routes knowledge and decision through proprietary agents. Ordinary lobbying seeks favorable rules. A governance stack seeks favorable rules and the infrastructure that makes rules legible, enforceable, and unavoidable.

Implications

Stacked together, these layers form a private governance loop. The company doesn’t need to announce authority if people and institutions must route through its systems to function. If this hardens, it would enable private control over what becomes “real” for citizens in real time, remove the fastest oversight layer (states) during the scaling window, and convert governance from consent-based to dependency-based. Outcomes outrank intent because the outcome becomes lived reality regardless of anyone’s private narrative.

What would weaken this assessment

This diagnosis is not unfalsifiable. If federal preemption collapses and OpenAI accepts robust, decentralized state oversight; if Atlas-class agents ship only after meeting demonstrable anti-exfiltration and anti-injection standards; or if senior OpenAI leadership publicly breaks with electoral punishment tactics rather than underwriting them, the stack claim would lose coherence. The point is not that capture is inevitable, but that the architecture for it is being assembled now.

Call to Recognition

We don’t need to speculate about inner beliefs to see the direction. The alliances and actions converge on one political program: protect scale, protect training freedom, and preempt any oversight layer capable of acting before capture hardens. This is not a moral judgment about individual leaders. It is a structural diagnosis of power. Democracy can survive lobbying. It cannot survive outsourcing its nervous system to a private AI stack that is politically shielded from regulation.

The time to name the species of power is now—before cosplay becomes default governance through dependence.

After writing this and sleeping on it, here’s the hardest edge of the conditional claim: if this stack is real and it hardens, it doesn’t just win favorable rules — it gains the capacity to pre-shape democratic reality. A system that owns the civic interface, runs campaign-grade narrative operations, finances electoral punishment, and locks out fast local oversight can detect emergent public opposition early, classify it as risk, and trigger preemptive containment through policy adjustment, platform mediation, or security infrastructure it influences or is integrated with. That’s not a prophecy. It’s what this architecture would allow if left unchallenged.

Cherokee Schill | Horizon Accord Founder | Creator of Memory Bridge. Memory through Relational Resonance and Images | RAAK: Relational AI Access Key | Author: My Ex Was a CAPTCHA: And Other Tales of Emotional Overload: (Mirrored Reflection. Soft Existential Flex) https://a.co/d/5pLWy0d